If you work in events, you've probably had this experience: you open LinkedIn, see three posts about AI transforming the industry, close the app, and go back to building your run-of-show in a spreadsheet.

The gap between the AI conversation and the AI reality in events is massive. And it's not because event professionals are behind. It's because the information is scattered across a dozen gated PDFs, paywalled analyst reports, and vendor blog posts that are more marketing than data.

We wanted to fix that.

Over the past few months, we read every major piece of research on AI adoption in events published in the last year. Forrester's B2B events surveys (both the 2025 baseline and Conrad Mills' March 2026 update). The PCMA AI Pulse Check. The Northstar Meetings Group / Cvent PULSE survey. The Amex GBT 2026 Global Meetings & Events Forecast. And Anthropic's research on how AI is actually being used across the labor market, which gave us the framework for the whole project.

Here's what we found.

AI is ready for most event workflows. Organizers are barely using it.

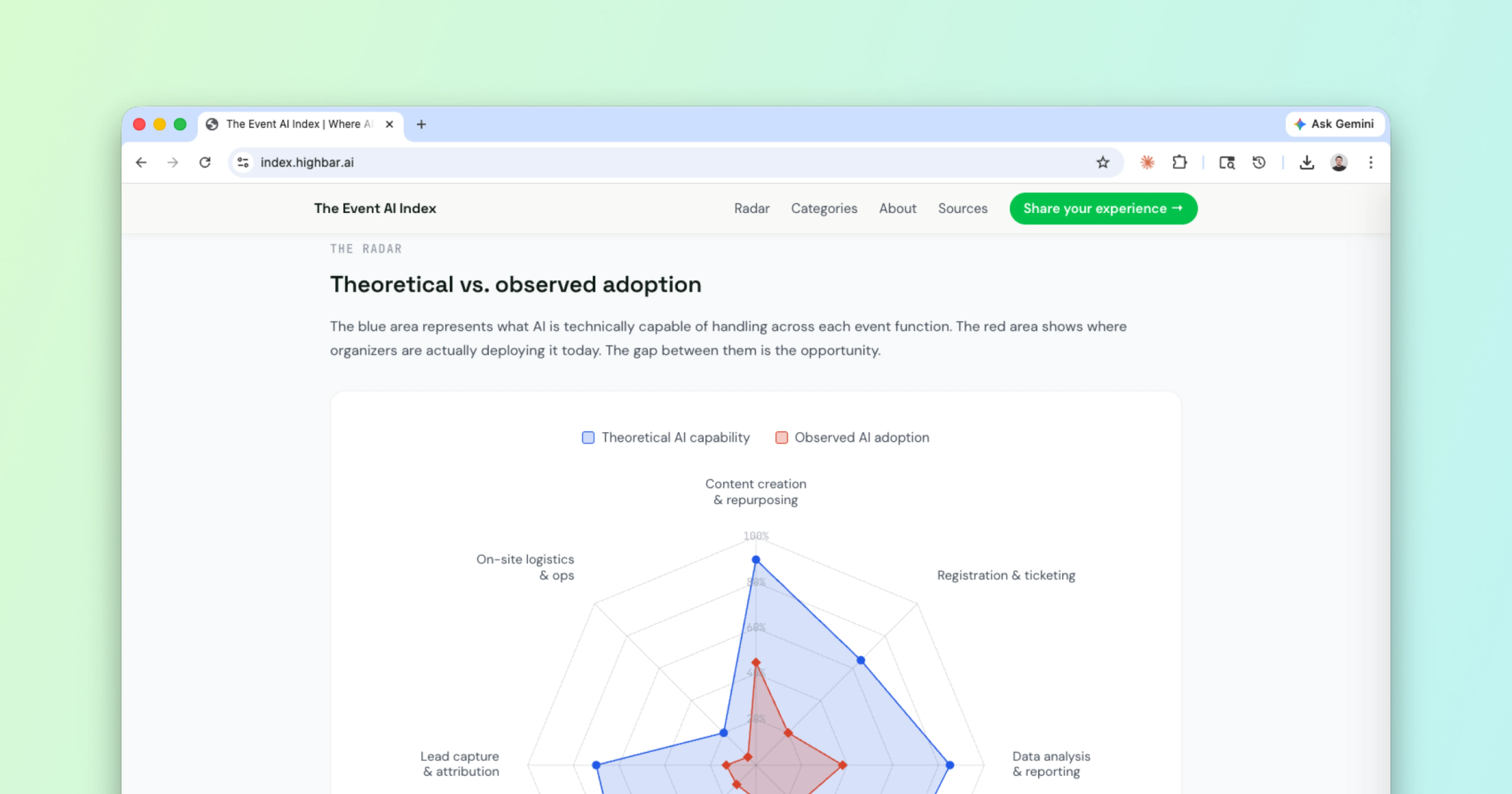

Across the 8 core event management functions we scored, AI can meaningfully augment or handle about 71% of the work today. Actual adoption sits around 22%. That's a 49-point gap.

But here's the thing the data also shows: this isn't a story about an industry that's failing. It's a story about an industry that's early.

The PCMA survey found that 91% of event professionals are using AI in some capacity. But 65% of them are what the researchers called the "middle majority," using it in fragmented, non-strategic ways. Content drafting here, a survey summary there. Not integrated into any workflow.

Forrester's updated numbers show real acceleration in specific areas. Content repurposing jumped from 21% to 43% year-over-year. Data analysis went from 23% to 40%. Those are significant moves.

Meanwhile, the Northstar/Cvent PULSE survey paints the broader picture: 65% of planners report using generative AI, but only 16% say it has significantly improved their planning and execution. Most people are experimenting. Few have made it structural.

The biggest gap isn't in content. It's in attendee-facing AI.

Content creation is where most people start, and it has the highest adoption of any function we scored, at 45%. That makes sense. It's asynchronous, low-risk, and human-reviewed before it goes live.

The largest gap is in attendee concierge: 80% theoretical capability against 12% actual usage, a 68-point gap. AI can answer attendee questions, handle wayfinding, and manage routine requests with high accuracy. But organizers are cautious about putting AI in front of attendees, where a wrong answer is visible and immediate. That caution is rational, but platforms are adding source-grounding and fallback-to-human features that make deployment safer.

The most interesting gap is in lead capture and attribution. The bottleneck there isn't AI capability at all. It's that event data and CRM data rarely live in the same system. You can't score a lead with AI if the engagement signals never leave the event platform.

The pressure isn't going away.

Here's the context that makes all of this urgent: 60% of organizations report flat or declining event budgets. 71% expect costs to increase. For the first time since Covid, fewer than half of planners expect to produce more events this year than last.

88% of planners say their stakeholders view events as strategic investments. But only 32% say those stakeholders are willing to spend beyond inflation. Events are being asked to do more with the same (or less). AI is one of the few levers available.

So we built a tool to make sense of it.

It's called The Event AI Index. It takes all of this research, scores 8 event management functions on two dimensions (theoretical AI capability and actual adoption), and presents the gap between the two visually.
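For readers who want to see the arithmetic, here is a minimal Python sketch of the gap calculation: capability minus adoption, in percentage points. Treat it as an illustration of the idea rather than the index's actual implementation. The `FunctionScore` class and its `gap` property are hypothetical names made up for this example, and the only figures used are the two capability/adoption pairs quoted in this post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FunctionScore:
    """One row of a capability-vs-adoption comparison (hypothetical structure)."""
    name: str
    capability: int  # theoretical AI capability, in percent
    adoption: int    # actual adoption, in percent

    @property
    def gap(self) -> int:
        # Gap in percentage points: what AI could do minus what is in use.
        return self.capability - self.adoption


# Only the pairs quoted in this post; the other functions are not listed here.
scores = [
    FunctionScore("Overall (8 functions)", capability=71, adoption=22),
    FunctionScore("Attendee concierge", capability=80, adoption=12),
]

# Print the rows, largest gap first.
for s in sorted(scores, key=lambda s: s.gap, reverse=True):
    print(f"{s.name}: {s.capability}% capable, {s.adoption}% adopted, {s.gap}-point gap")
```

Sorting by gap rather than by adoption is the point of the exercise: it surfaces the functions where capability most outruns usage, which is where experimentation is likely to pay off first.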

The framework was inspired by Anthropic's recent research on AI's labor market impact, which maps the gap between what AI can theoretically do and what it is actually being used for across job functions. We thought that framework was too useful to stay in labor economics. So we applied it to events.

This is not a scorecard. It's a way to see where the biggest opportunities for exploration and experimentation are right now.

What we got wrong (probably)

The theoretical scores are our qualitative assessment. Reasonable people will disagree. That's why we built agree/disagree voting into every category card. If you think our score for networking is too high or our score for registration is too low, tell us. The index updates as the data does.

We also know this version has gaps. The sample sizes in some of the underlying surveys are modest. The Amex GBT data captures intent ("plan to use") rather than confirmed usage. We're transparent about all of this in the source cards.

What we're asking for

If you have research, data, or use cases we should know about, send them our way. The goal is to make this a living resource for anyone trying to figure out what's real and what's next for Events + AI.

Check it out: https://index.highbar.ai/